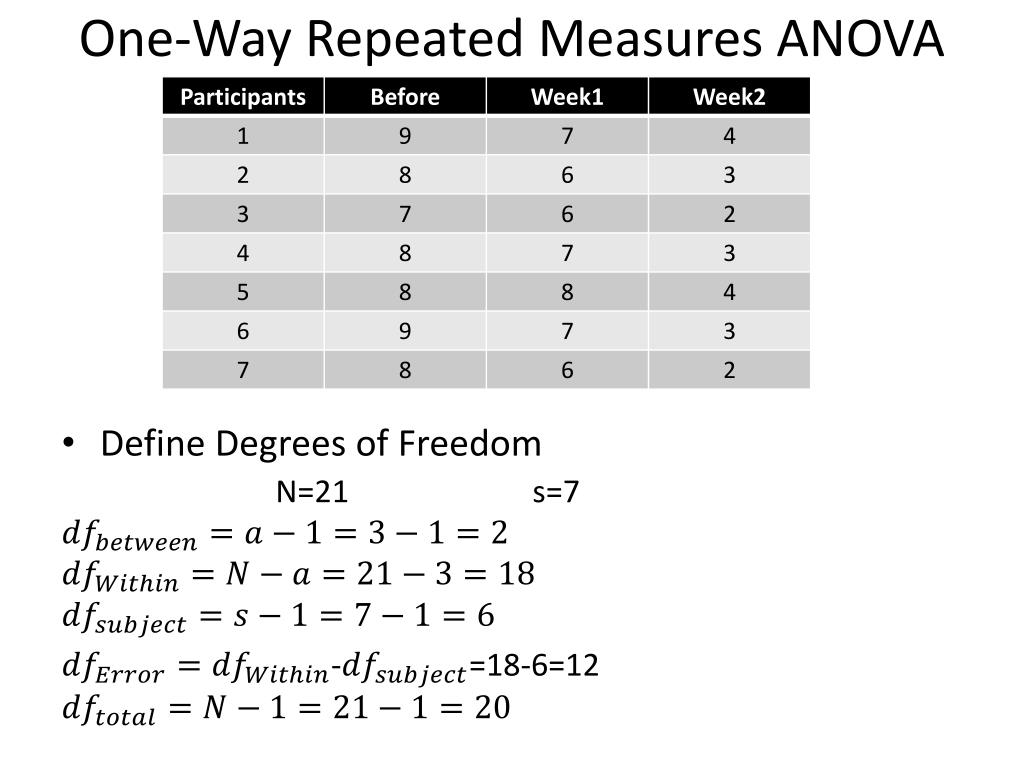

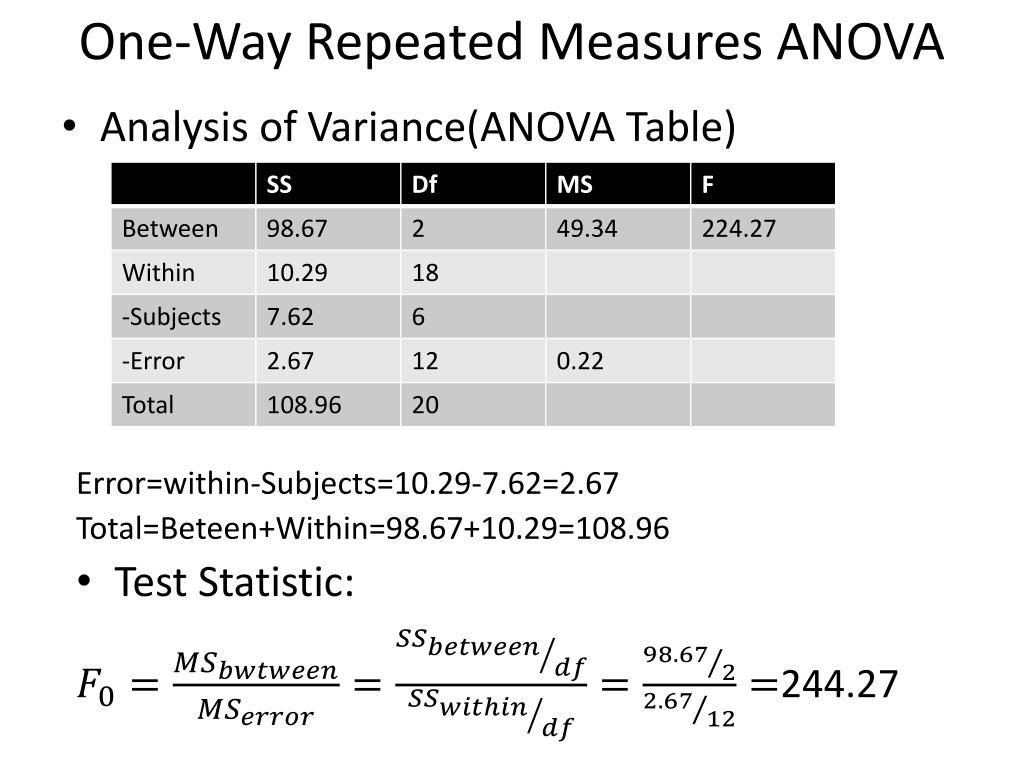

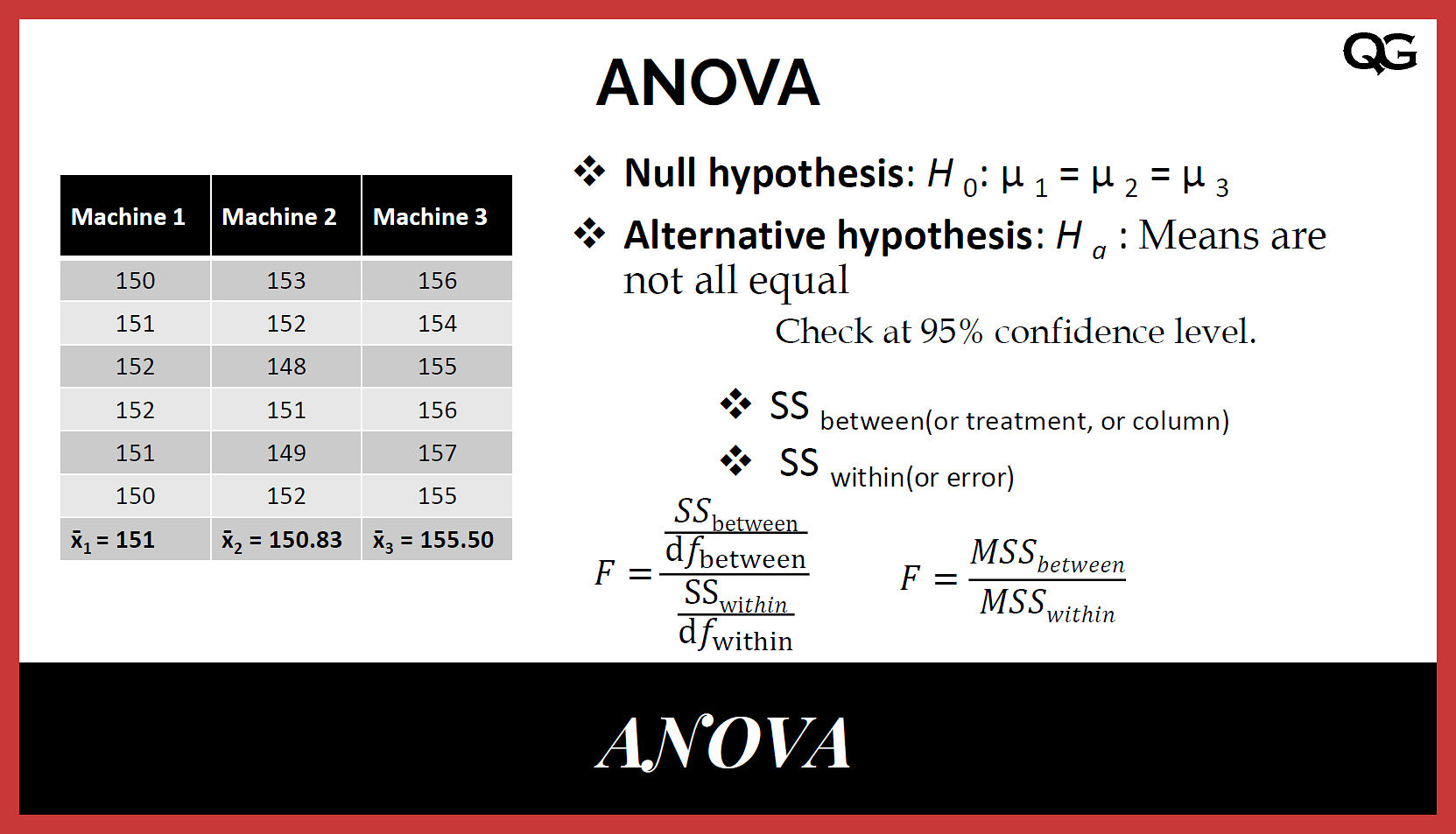

SS(Between) quantifies, as the name suggests, the variability between the groups of interest. Again, as we'll formalize below, SS(Error) is the sum of squares between the data and the group means. It quantifies the variability within the groups of interest. SS(Total) is the sum of squares between the n data points and the grand mean. As the name suggests, it quantifies the total variability in the observed data. We'll soon see that the total sum of squares, SS(Total), can be obtained by adding the between sum of squares, SS(Between), to the error sum of squares, SS(Error).

The mean squares (MS) column, as the name suggests, contains the "average" sum of squares for the Factor and the Error. The Mean Sum of Squares between the groups, denoted MSB, is calculated by dividing the Sum of Squares between the groups by the between group degrees of freedom, that is, MSB = SS(Between)/(m−1). The Error Mean Sum of Squares, denoted MSE, is calculated by dividing the Sum of Squares within the groups by the error degrees of freedom, that is, MSE = SS(Error)/(n−m).

The F column, not surprisingly, contains the F-statistic. Because we want to compare the "average" variability between the groups to the "average" variability within the groups, we take the ratio of the Between Mean Sum of Squares to the Error Mean Sum of Squares. That is, the F-statistic is calculated as F = MSB/MSE.

When, on the next page, we delve into the theory behind the analysis of variance method, we'll see that the F-statistic follows an F-distribution with m−1 numerator degrees of freedom and n−m denominator degrees of freedom. Therefore, we'll calculate the P-value, as it appears in the column labeled P, by comparing the F-statistic to that distribution.

Now, having defined the individual entries of a general ANOVA table, let's revisit and, in the process, dissect the ANOVA table for the first learning study on the previous page, in which n = 15 students were subjected to one of m = 3 methods of learning. Because n = 15, there are n−1 = 15−1 = 14 total degrees of freedom. Because m = 3, there are m−1 = 3−1 = 2 degrees of freedom associated with the factor. The degrees of freedom add up, so we can get the error degrees of freedom by subtracting the degrees of freedom associated with the factor from the total degrees of freedom. That is, the error degrees of freedom is 14−2 = 12.
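To make the table entries concrete, here is a minimal Python sketch that computes the degrees of freedom, sums of squares, mean squares, and F-statistic for a one-factor layout with n = 15 and m = 3. The scores and the `anova_table` helper are hypothetical, invented for illustration; they are not the data from the learning study.

```python
# Hypothetical scores for m = 3 groups of 5 students each (n = 15).
# These numbers are made up for illustration; they are NOT the learning-study data.
groups = {
    "A": [1, 2, 3, 4, 5],
    "B": [2, 3, 4, 5, 6],
    "C": [4, 5, 6, 7, 8],
}


def anova_table(groups):
    """Return the one-factor ANOVA table entries as a dict (illustrative helper)."""
    all_values = [x for values in groups.values() for x in values]
    n = len(all_values)   # total number of observations
    m = len(groups)       # number of groups (factor levels)
    grand_mean = sum(all_values) / n

    ss_between = 0.0
    ss_error = 0.0
    for values in groups.values():
        group_mean = sum(values) / len(values)
        # Between: squared distance of each group mean from the grand mean,
        # weighted by the group size.
        ss_between += len(values) * (group_mean - grand_mean) ** 2
        # Error: squared distance of each observation from its own group mean.
        ss_error += sum((x - group_mean) ** 2 for x in values)
    # Total: squared distance of each observation from the grand mean.
    ss_total = sum((x - grand_mean) ** 2 for x in all_values)

    df_between, df_error, df_total = m - 1, n - m, n - 1
    msb = ss_between / df_between
    mse = ss_error / df_error
    return {
        "df_between": df_between,
        "df_error": df_error,
        "df_total": df_total,
        "ss_between": ss_between,
        "ss_error": ss_error,
        "ss_total": ss_total,
        "msb": msb,
        "mse": mse,
        "f": msb / mse,
    }


table = anova_table(groups)
for name, value in table.items():
    print(f"{name:>10}: {value:.4f}")
```

With any data of this shape, the degrees of freedom come out as 2, 12, and 14, and `ss_total` equals `ss_between + ss_error` up to floating-point rounding. The P-value is left out because the Python standard library has no F-distribution; if SciPy is available, `scipy.stats.f.sf(table["f"], table["df_between"], table["df_error"])` would give the upper-tail probability.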
